A question from the 1700s and an Instagram reel about the Andromeda Galaxy explain why organizations struggle to trust AI.

We recently heard of an AI building in three hours what a team had been working on for three months. By now, everyone knows AI can do this. Yet the moment felt uncomfortable. Not because it was shocking, but because someone said out loud that AI did it.

That discomfort is not an AI problem. It is a very old human problem.

In 1710, George Berkeley raised a question that is now usually phrased like this: if a tree falls in a forest and no one is around, does it make a sound? At its core, the question is simple. Can something be accepted as true without validation?

Most people have heard it and moved on. I did too, until an Instagram forward connected it to something far more current.

The video described a thought experiment about travelling to the Andromeda Galaxy, about 2.5 million light years away. If someone could travel close to the speed of light, the journey might feel short to them. But by the time they returned, millions of years would have passed on Earth. Everyone they knew would be gone. Perhaps even the human species.

So, with no one left to hear the story or validate it, did the journey truly happen?

This is exactly where we are with AI.

We need validation. So even when the AI output is strong, we tweak it, rewrite it, or present it as our own intuition. It works, because it is now validated by human intelligence.

Without that validation, it feels uncomfortable to accept it as truth.

The tree fell. Loudly. Clearly. Undeniably. But without someone in the forest to acknowledge it, we hesitate to accept that it made a sound.

The companies moving ahead are not the ones that fully trust AI. They are the ones that have reduced the amount of validation required before acting. They test, they iterate, and they own the outcome, even when the work was not produced in the traditional way.

They do not wait for the forest to fill up.

They see a fallen tree, decide it made a sound, and move.

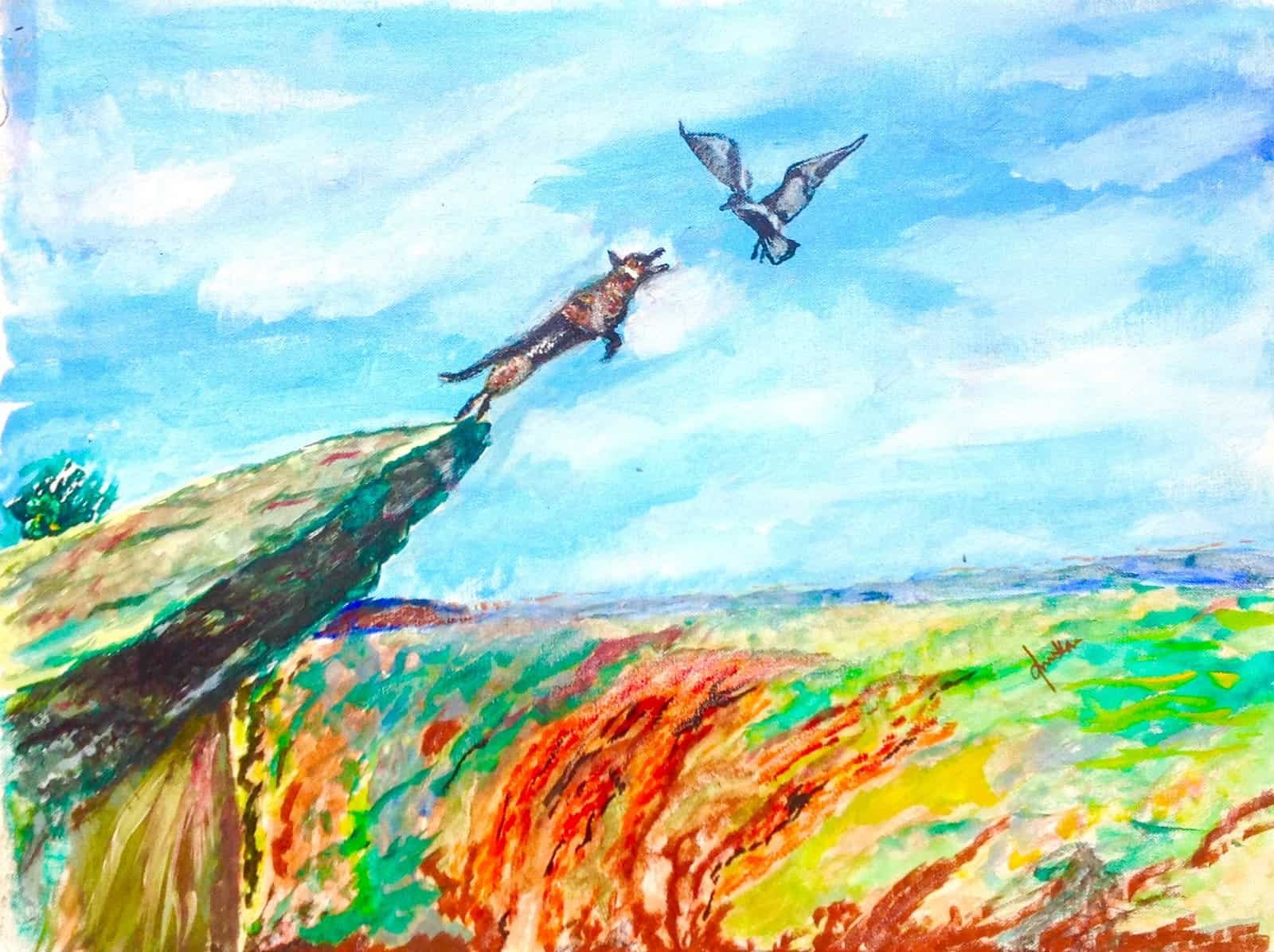

Note: This painting is my first oil work, created 10 years ago and titled “The edge is not the end.” The fox does not wait for validation of its strategy. It sees the bird and jumps. Ten years later, the world has changed, but the instinct to move forward has not. Wait for validation and AI will move ahead without you. Take the leap. The edge is often the beginning.
